Most traders assume their automated trading setup is secure once the bot is live and the API keys are locked down. That assumption is dangerous. The real vulnerabilities in automated prediction market trading are far subtler: model-level attacks that quietly erode profits, regulatory blind spots that invite enforcement action, and cascading system failures that look like normal market noise until it's too late. Adversarial attacks on deep learning models used in automated trading can degrade profitability without triggering any obvious system error. This guide walks you through the real threat landscape, practical defenses, and what compliance looks like in 2026.

Table of Contents

- Why security matters in automated trading

- Types of threats in automated trading systems

- Building secure and resilient automated trading systems

- Navigating compliance and regulatory enforcement

- Why most traders misunderstand security in automated trading

- Take the next step: Secure your automated trading

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Hidden threats | Adversarial attacks can silently reduce trading profits without noticeable errors. |
| Adaptive defenses | Dynamic controls like FDR circuit breakers outperform static methods for risk management. |
| Regulatory focus | The SEC prioritizes access controls, algorithm change monitoring, and fraud prevention in enforcement. |
| Practical resilience | Security comes from layered defenses, audit trails, and regular testing, not just one-time setups. |

Why security matters in automated trading

Automated trading has grown from a niche institutional tool into a mainstream strategy for prediction market traders. With that growth comes a much larger attack surface. The AI-driven trading market reached $27B in 2026, with algorithms handling 60 to 70% of total trading volume. That scale creates both opportunity and systemic risk.

When a vulnerability hits one automated system, it can ripple across interconnected markets. Flash crashes, sudden trading halts, and coordinated manipulation are all documented outcomes of compromised algorithmic systems. The threat isn't always external either. Internal configuration errors, poor access management, and unreviewed model updates are just as dangerous.

| Threat category | Example | Potential impact |
|---|---|---|
| Adversarial model attacks | Subtle input perturbations | Silent profit erosion |
| Cybersecurity breaches | API key theft | Unauthorized trades |
| Insider manipulation | Unauthorized model edits | Fraud, regulatory action |
| Cascading failures | Correlated bot behavior | Flash crashes, halts |

Here's what makes prediction markets particularly exposed:

- Thin liquidity means even small manipulations can move prices significantly

- Event-driven markets create predictable windows that attackers can exploit

- Decentralized infrastructure reduces centralized oversight and response speed

- Rapid bot deployment often outpaces security review cycles

"Benchmarks show AI-driven systems generate 23% higher returns on average, but systemic vulnerabilities scale alongside those gains."

Understanding AI trading bots and how they interact with market structure is the first step toward identifying where your exposure actually lives. The role of AI in prediction markets continues to expand, and so does the need for proactive security thinking.

Types of threats in automated trading systems

With the stakes outlined, let's break down where systems are most exposed. Threats in automated trading fall into a few distinct categories, and knowing the difference helps you prioritize your defenses.

Adversarial model attacks are among the least visible and most damaging. Adversarial perturbations on deep learning models can degrade model performance without triggering obvious technical errors. Your bot keeps running, logs look normal, but returns quietly deteriorate over days or weeks.

Conventional cyber threats include API key exposure, phishing, and unauthorized access to trading accounts. These are more familiar but still widely underestimated in prediction market contexts.

| Threat type | Detection difficulty | Typical damage |
|---|---|---|
| Adversarial model attack | High | Gradual profit loss |
| API key compromise | Medium | Immediate unauthorized trades |
| Insider model changes | Medium | Fraud, compliance violations |
| Cascading bot failure | Low to medium | Market disruption |

Here are five steps to audit your system exposure:

- Review access logs weekly for any unauthorized logins or API calls

- Audit algorithm version history to confirm no unauthorized changes were made

- Test model outputs against baseline benchmarks after any update

- Simulate adversarial inputs to measure model robustness

- Check third-party integrations for outdated permissions or deprecated endpoints
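The "simulate adversarial inputs" step above can be sketched in a few lines. This is a minimal illustration, not a production test: it assumes a toy linear signal model standing in for a real deep learning model, and the names `decision`, `worst_case_perturbation`, and `flip_rate` are hypothetical. For a linear model, the most damaging bounded perturbation can be computed directly, so no attack library is needed.

```python
def score(features, weights, bias=0.0):
    """Weighted sum of the input features (toy stand-in for a real model)."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def decision(features, weights, bias=0.0):
    """Return 'buy' when the score is positive, otherwise 'sell'."""
    return "buy" if score(features, weights, bias) > 0 else "sell"


def _sign(value):
    return (value > 0) - (value < 0)


def worst_case_perturbation(features, weights, epsilon, bias=0.0):
    """Shift every feature by at most epsilon in the direction that pushes
    the score toward the opposite decision (exact for a linear model)."""
    direction = -1.0 if score(features, weights, bias) > 0 else 1.0
    return [x + direction * epsilon * _sign(w) for x, w in zip(features, weights)]


def flip_rate(samples, weights, epsilon, bias=0.0):
    """Fraction of samples whose decision flips under the worst-case shift."""
    flipped = 0
    for features in samples:
        attacked = worst_case_perturbation(features, weights, epsilon, bias)
        if decision(attacked, weights, bias) != decision(features, weights, bias):
            flipped += 1
    return flipped / len(samples)


# Hypothetical inputs: three feature vectors and a two-weight model.
weights = [0.5, -0.25]
samples = [[1.0, 1.0], [0.1, 0.1], [-2.0, 0.0]]
print(flip_rate(samples, weights, epsilon=0.1))
```

A rising flip rate at small values of `epsilon` suggests the model's decisions sit close to its decision boundary, which is where quiet input manipulation does the most damage. For a non-linear model you would need a gradient-based or search-based attack instead of this closed-form shift.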

SEC enforcement actions specifically cite unauthorized model changes and weak access controls as leading sources of trading fraud. Regulators are not just watching for obvious manipulation. They're looking at whether your system architecture makes manipulation possible in the first place.

For traders using automated prediction markets, this means your security posture is also your compliance posture. They're not separate concerns.

Pro Tip: Always log every algorithm change with a timestamp, author, and reason. This single habit can protect you in both security audits and regulatory reviews.
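One way to make that habit hard to skip is to route every change through a small helper that refuses incomplete entries. The sketch below is an assumption about how you might structure it: the `AlgorithmChange` fields mirror the tip (timestamp, author, reason) plus a hypothetical `version` label, and the JSON-lines file format is just one convenient choice.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AlgorithmChange:
    timestamp: str  # ISO 8601, UTC
    author: str
    reason: str
    version: str    # hypothetical label for the strategy version


def format_change(author, reason, version, now=None):
    """Build one JSON log line; reject entries missing an author or reason."""
    if not author.strip() or not reason.strip():
        raise ValueError("author and reason are required for every change")
    moment = now or datetime.now(timezone.utc)
    entry = AlgorithmChange(moment.isoformat(), author, reason, version)
    return json.dumps(asdict(entry), sort_keys=True)


def append_change(log_path, author, reason, version):
    """Append the change to a JSON-lines file and return the written line."""
    line = format_change(author, reason, version)
    with open(log_path, "a", encoding="utf-8") as handle:
        handle.write(line + "\n")
    return line
```

Calling `append_change("changes.jsonl", "alice", "tighten stop-loss", "v1.4.2")` would add one line to a file of your choosing. The file name, author, and version string here are placeholders.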

Building secure and resilient automated trading systems

With the risks mapped, the next step is securing your system with practical tools and strategies. The most common mistake traders make is relying on static controls, fixed thresholds, and one-time security reviews that don't adapt as markets change.

Static thresholds often fail in dynamic markets. Adaptive statistical methods, including FDR-controlled (false discovery rate) circuit breakers, provide significantly better anomaly detection because they adjust to changing market conditions rather than assuming a fixed baseline.
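To make the idea concrete, here is a minimal sketch of an FDR-controlled check using the Benjamini-Hochberg procedure. Several details are assumptions for illustration: each market's latest value is compared against its own rolling baseline, p-values come from a normal approximation, and the function names and the `max_flagged` halt rule are hypothetical. Because the baseline window moves with the market, the effective threshold adapts to current volatility instead of staying fixed.

```python
import math
from statistics import mean, stdev


def two_sided_p_value(value, baseline):
    """P-value of `value` against a rolling baseline (normal approximation)."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return 1.0 if value == mu else 0.0
    z = abs(value - mu) / sigma
    return math.erfc(z / math.sqrt(2))


def benjamini_hochberg(p_values, alpha=0.05):
    """Return indices rejected at false discovery rate `alpha` (step-up rule)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha * rank / m:
            cutoff = rank  # keep the largest rank that passes
    return sorted(order[:cutoff])


def should_halt(latest_by_market, baseline_by_market, alpha=0.05, max_flagged=1):
    """Flag anomalous markets and trip the breaker once enough are flagged."""
    markets = sorted(latest_by_market)
    p_values = [
        two_sided_p_value(latest_by_market[name], baseline_by_market[name])
        for name in markets
    ]
    flagged = [markets[i] for i in benjamini_hochberg(p_values, alpha)]
    return len(flagged) >= max_flagged, flagged
```

The advantage over testing each market against its own fixed cutoff is that the procedure accounts for how many markets you are watching at once, so monitoring more markets doesn't automatically produce more false alarms.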

Here's what a layered security approach looks like in practice:

- Access control: Use role-based permissions so only authorized users can modify bot logic or access API credentials

- Audit trails: Maintain persistent, tamper-evident logs of all system changes, trades, and access events

- Adaptive circuit breakers: Implement FDR-controlled thresholds that flag anomalies relative to current market volatility

- Model monitoring: Track live model outputs against historical benchmarks to catch silent performance degradation

- Encryption: Encrypt all data in transit and at rest, including API keys and strategy parameters

- Incident response plan: Define clear steps for what happens when an anomaly is detected
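The "tamper-evident" part of the audit trail item is worth illustrating. One common approach, sketched here as an assumption rather than a prescribed design, is a hash chain: each log entry stores the hash of the previous entry, so editing any past record breaks every hash after it. The function names and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, event):
    """SHA-256 over the previous hash plus a canonical JSON form of the event."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(chain, event):
    """Append an event, linking it to the hash of the entry before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Recompute every link; any edited or reordered entry fails the check."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects tampering if the latest hash is also stored somewhere the attacker can't rewrite, such as a separate system or a periodic export. Otherwise someone with full write access could rebuild the whole chain.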

Layered defenses consistently outperform single-point solutions. A strong password alone won't protect you if your model is being manipulated at the input level. Combining access controls with model monitoring and adaptive circuit breakers creates overlapping protection that's much harder to bypass.
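The model monitoring layer can start very simply. The sketch below assumes you score each resolved trade as a win or a loss and compare a rolling hit rate with a benchmark from backtesting. The class name, window size, and tolerance are hypothetical and would need tuning for your own strategy.

```python
from collections import deque


class PerformanceMonitor:
    """Track a rolling hit rate and flag drops below a historical benchmark."""

    def __init__(self, baseline_hit_rate, window=50, tolerance=0.10):
        self.baseline = baseline_hit_rate
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # oldest results fall off

    def record(self, won):
        self.outcomes.append(1 if won else 0)

    def hit_rate(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self):
        """True only once the window is full and the rate is below tolerance."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.hit_rate() < self.baseline - self.tolerance
```

A degraded flag doesn't prove an attack. Market regimes change too. What it gives you is a trigger to investigate before weeks of quiet losses accumulate.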

For traders interested in statistical arbitrage methods, security controls need to account for the speed and frequency of those strategies. High-frequency approaches amplify both the rewards and the risks of any vulnerability. Reviewing your Polymarket trading strategies with security in mind is a practical starting point.

Pro Tip: Schedule a red-team exercise every quarter. Have a trusted colleague or security professional attempt to manipulate your bot's inputs or access its controls. What they find will surprise you.

Navigating compliance and regulatory enforcement

Security is only half the challenge. Understanding and anticipating regulatory action is the other. In 2026, the SEC has sharpened its focus on algorithmic trading systems, and prediction market operators are not exempt from scrutiny.

SEC enforcement specifically targets model vulnerabilities, poor access controls, unauthorized algorithm changes, and inadequate fraud prevention. Enforcement cases have included situations where a model decorrelation failure, meaning a bot's logic drifted from its documented strategy, led directly to fraud charges.

"Regulators are increasingly focused on whether trading systems are designed to prevent manipulation, not just whether manipulation actually occurred."

This is a critical distinction. You can be held accountable for a system architecture that enables misconduct, even if no misconduct has happened yet. That means your compliance obligations start at the design stage.

Here's a practical compliance checklist for automated prediction market traders:

- Document every model change with version control and a clear audit trail

- Maintain access logs that show who accessed what and when

- Conduct regular system audits to verify that live bot behavior matches documented strategy

- Implement surveillance tools that can detect unusual trading patterns in real time

- Review third-party integrations for compliance implications, especially data feeds and execution layers

- Keep records for at least three years, as SEC investigations often look back over extended periods
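The audit item about live bot behavior matching documented strategy can be partly automated. One simple check, sketched here under the assumption that you keep a registry of approved versions, is to fingerprint the deployed strategy file and compare it with the fingerprint recorded at approval time. The function names and the registry layout are hypothetical.

```python
import hashlib


def fingerprint(strategy_source: bytes) -> str:
    """SHA-256 fingerprint of the strategy code or config, as raw bytes."""
    return hashlib.sha256(strategy_source).hexdigest()


def matches_documented(live_source: bytes, approved: dict, version: str) -> bool:
    """True if the live strategy is byte-for-byte the approved version."""
    expected = approved.get(version)
    if expected is None:
        return False  # an undocumented version is itself a finding
    return fingerprint(live_source) == expected


# Hypothetical registry built when a version is reviewed and approved.
approved = {"v1": fingerprint(b"rule: buy below 0.40")}
print(matches_documented(b"rule: buy below 0.40", approved, "v1"))
```

This only confirms that the deployed code is the code that was reviewed. It doesn't tell you whether the strategy behaves as documented in live markets, so it complements behavioral audits rather than replacing them.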

Encrypted surveillance systems are becoming a standard expectation, not just a best practice. If your trading infrastructure can't produce a clear, verifiable record of its own behavior, you're already behind regulatory expectations.

Exploring the best AI trading platforms available in 2026 means evaluating them not just on features but on their built-in compliance and audit capabilities.

Why most traders misunderstand security in automated trading

Here's the uncomfortable reality: most traders treat algorithmic security as a setup task, not an ongoing discipline. They configure their bot, enable two-factor authentication, and consider the job done. That mindset is exactly what attackers and regulators count on.

True resilience isn't a feature you enable. It's a habit you build. Automation boosts efficiency by 20 to 30%, but when many traders use similar AI models, herding behavior amplifies systemic risk across the entire market. Your individual security posture affects not just your returns but market stability overall.

The traders who stay secure long-term are the ones who treat their bot as a living system. They test it, update it, audit it, and question it regularly. Static controls erode. Markets evolve. Attackers adapt. Your security strategy needs to do the same.

The changing landscape of prediction markets rewards traders who combine automation with ongoing vigilance, not those who automate and disengage.

Take the next step: Secure your automated trading

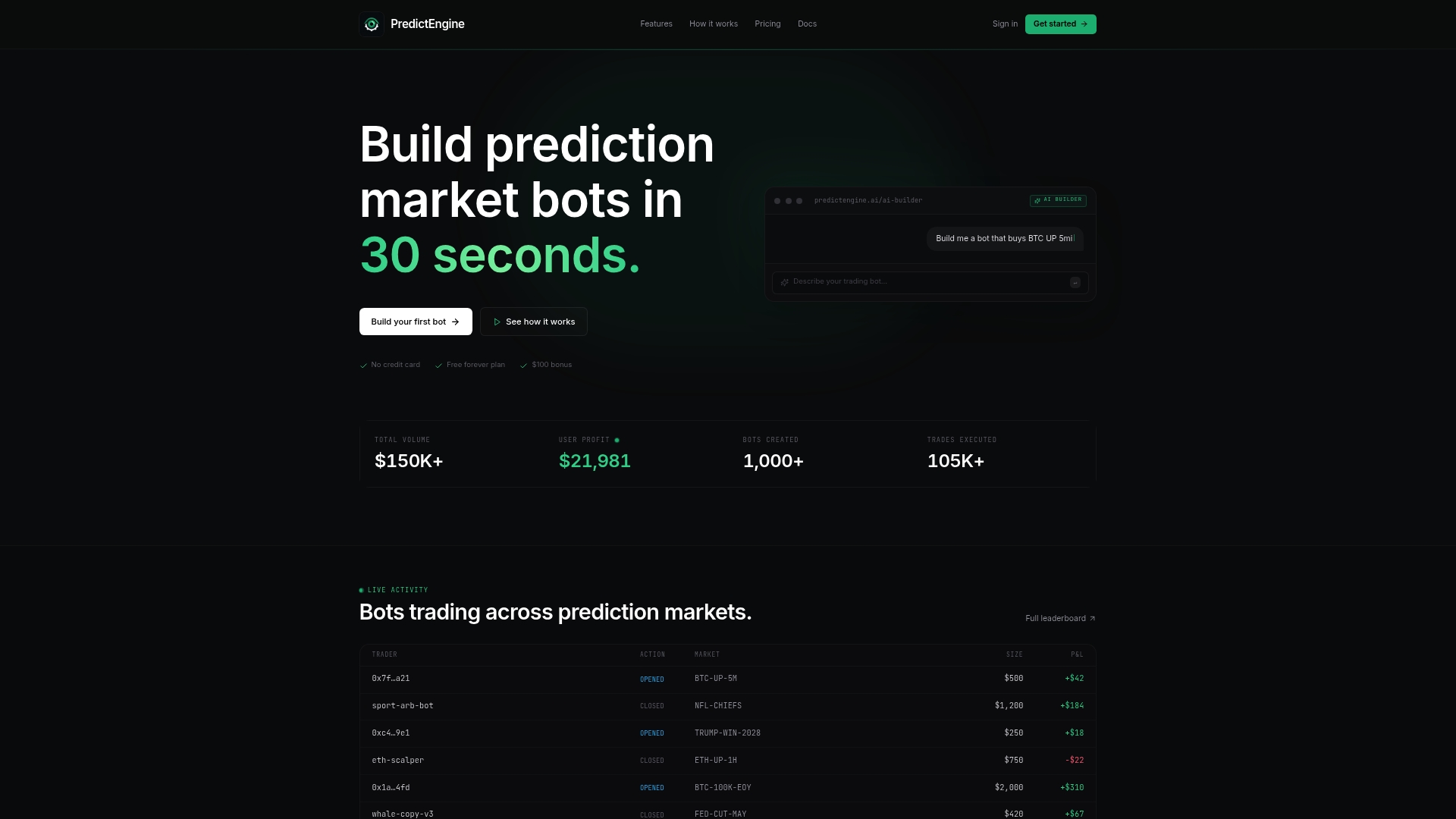

If you're ready to build a more secure and compliant automated trading setup, PredictEngine gives you the tools to do it without needing to write a single line of code.

PredictEngine's platform is built with security and compliance in mind. You get real-time monitoring dashboards, structured audit trails, and adaptive controls that align with the best practices covered in this guide. Whether you're deploying an AI trading bot for the first time or scaling up with Polymarket arbitrage strategies, the PredictEngine platform gives you the infrastructure to trade with confidence. Start building smarter, safer bots today.

Frequently asked questions

What is the biggest security challenge in automated trading?

Adversarial model attacks are among the hardest to detect because they degrade trading performance gradually without triggering obvious system errors or alerts.

How can I protect my trading bot from manipulation?

Use detailed access controls, log all algorithm changes, and apply adaptive safeguards like FDR circuit breakers. SEC guidance also emphasizes regular audits and documented risk management processes.

Are secure trading bots harder to use?

Not with modern platforms. Most well-designed trading bots include built-in access management and anomaly detection features that work in the background without adding friction to your daily workflow.

What regulatory bodies oversee automated trading security?

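In the US, the SEC is the primary regulator for securities markets, focusing on model integrity and access controls as core compliance requirements for algorithmic trading systems. Event contracts traded on regulated prediction market exchanges generally fall under the CFTC, so check which regulator applies to the venues you trade on.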